Rights and Wrongs - Can Machines Override Human Judgment on Air Safety?

28 March 2019

Over the years, air travel has become remarkably safe – in 1977, four out of one million flights met with accidents; today, the number of flights has grown exponentially, and the accident rate has fallen to 0.4 out of a million. Air travel is safer than most other modes of transport. Many more people die in road accidents each year than in air crashes.

And yet, trust in air safety is now shaken. Two crashes within five months involving a modern aeroplane – the Boeing 737 Max – have raised serious concerns. Investigators are examining evidence from the two crashes – in Indonesia in October (in which 189 died) and in Ethiopia in March (in which 157 died) – and before long we will know what happened. Initial accounts reveal practices that are deeply troubling for their potential impacts on passenger safety, and underscore the need for more rigorous due diligence.

The first concerns the process of developing and operating new planes, and the pressures to cut corners. True, accidents, including freak occurrences, can happen. Equipment can malfunction. But most journeys are expected to be uneventful precisely because of the magnificent choreography of pilots, air traffic controllers, mechanics, designers, and many others.

Designing, developing, and manufacturing new aeroplanes takes time, and competitive pressures compel companies to speed up the process. Competition also puts downward pressure on costs, which means manufacturers offer no-frills planes, then add features and charge more for them, seeking ways to keep adding to earnings over time. That’s fine for genuine frills, such as on-board entertainment equipment, but not if it involves upgrading safety features. According to a New York Times report, Ethiopian Airlines and Lion Air, which suffered the recent crashes, had not installed optional updates for which Boeing was charging extra. To be sure, the updates were not required by regulators, and many other airlines have not paid for those updates.

Boeing’s business strategy compels it to cut costs because rival Airbus has been making rapid inroads. Boeing decided to upgrade its existing aircraft – the 737 – as a quicker option than developing a new plane, which would have taken another decade to receive regulatory approvals. An enhanced 737 requires fewer regulatory approvals, the reasoning went. Boeing also benefited from a US fast-track approval process in operation since 2005. Initial reports suggest the 737 Max crashes occurred because a new automated anti-stall system (known as MCAS) possibly functioned in unanticipated ways. The aircraft, all 371 of which are now grounded worldwide, has 5,000 orders worth $600 billion. This has implications beyond Boeing, and could affect the US economy.

Commercial exigencies should not prevail over safety concerns. And while it is too early to tell if deregulation led to procedural short-cuts that allowed the 737 Max to start flying commercially earlier, the fact is that the US Federal Aviation Administration (FAA) permits Boeing to self-regulate certain aspects of its manufacturing, as the Wall Street Journal reported. This means that qualified, FAA-trained Boeing employees monitor and evaluate certain production processes and certify the plane as airworthy. Without questioning the integrity of the individuals involved, this is problematic at many levels; in particular, it raises the second major problem – that of conflict of interest.

If individuals certifying aircraft are also employed by the manufacturer, they will inevitably face dilemmas. There is no off-the-shelf ‘right’ answer when such arrangements are in place, but this raises many questions the company has to ask constantly. That is why an independent authority – public or private – should be certifying crucial manufacturing processes.

The other conflict is more critical – that of regulatory capture, although the US is not the only country affected by it. Boeing has 30 in-house lobbyists and 16 outside firms representing its interests over tax laws, defence contracts, and other regulatory measures, including safety. The Wall Street Journal reports, “Federal lobbying records show that more than 10 of Boeing’s government-affairs staff lobbied the FAA last year on the issue of certification as the company worked to bring new airplane models to market.” In 2018, Boeing spent $15.1 million on lobbying, which made it the fourth-largest lobbyist among companies, according to the Center for Responsive Politics. Lobbying is not illegal, nor should it be. But it has to be transparent.

It is a matter of concern that the US Congressman who chairs the sub-committee that oversees the FAA represents a district in Washington State where Boeing is a large employer. The industry and some politicians have applied pressure on the FAA to make safety reviews quicker and less costly while ensuring safety. This ought to raise questions because it can lead to regulatory decisions that can have adverse impacts on human rights, including the right to life, safety, and security, and correspondingly on the company’s duty of care to its employees and its customers.

US lawmakers are concerned. Several are focusing on the Organization Designation Authorization programme, under which the FAA delegates some aspects of safety certification to the manufacturer. Senator Richard Blumenthal says the programme leaves “the fox guarding the henhouse.” Congressional hearings starting this week will be an opportunity for lawmakers to examine the issues in detail, in particular the self-audits.

This goes to the heart of the UN’s Protect-Respect-Remedy framework for business and human rights. The state’s duty to protect, in this case, implies ensuring that its regulation is fit for the purpose of ensuring safety. The corporate responsibility to respect human rights comes into play because the company has the responsibility to conduct due diligence to minimize risks to human rights, and it must change practices that may have allowed any short-cuts in its own audits and be willing to accept external examination. And lawmakers must develop an adequate remedy that prevents the recurrence of procedures or practices that permit abuses.

The third problem goes beyond politics and concerns our faith in technology. Human error can cloud sound judgment; technology is meant to make it easier to avoid mistakes. An air crash is often a combination of mechanical and human error, and to eliminate the latter, manufacturers have attempted to create fail-safe technological solutions. But no technology is perfect, and there are always margins of error.

Humans are fallible, which is why technology takes over some repetitive and tedious tasks, so pilots are free to respond to unusual situations and the probability of making an error is reduced. While Boeing’s philosophy relies on empowering the pilot, Airbus has focused on getting the technology right.

This is not the place to comment on which approach is superior, nor am I qualified to draw any conclusions. But it is fair to say that excessive reliance on technology can pose serious problems – not only in nightmarish sci-fi scenarios like the Arthur C Clarke story (which Stanley Kubrick made into the mesmerising film 2001: A Space Odyssey), where the supercomputer HAL takes over the operations of the space mission, but in more humdrum matters.

This goes beyond aviation: more and more industries are exploring the possibility of machine learning replacing human intelligence – driverless trains, trucks and cars, for example. Can artificial intelligence always be relied upon to make more rational choices? Binary code follows outwardly rational approaches, relying on “if-then” scenarios, or choosing between several options to arrive at specific outcomes based on algorithms developed by engineers. The effect of such choices on human rights can pose problems that philosophers have found hard to grapple with, such as the moral dilemma posed by the runaway trolley. Human intelligence, guided by thinking that takes human rights impacts into account, is critical so that the decisions made do not have adverse consequences. (Should it be a machine that decides whether an aircraft with 250 passengers running short of fuel should make an emergency landing on water or in a densely populated area, with casualties certain in both scenarios?)

In other words, relying on technology to anticipate and mitigate risks is limited by the ability of that technology to respond to every risk in a manner that is consistent with human rights protection as the overriding objective. We simply don’t understand the risks of automated aeroplanes, as veteran pilot Jeff Wise wrote recently; and that means we can’t let our judgment be outsourced to a machine. While all risks cannot be eliminated, digging deeper to understand the potential impacts of each decision on people is the very least we should expect of companies. That should be Boeing’s focus now.

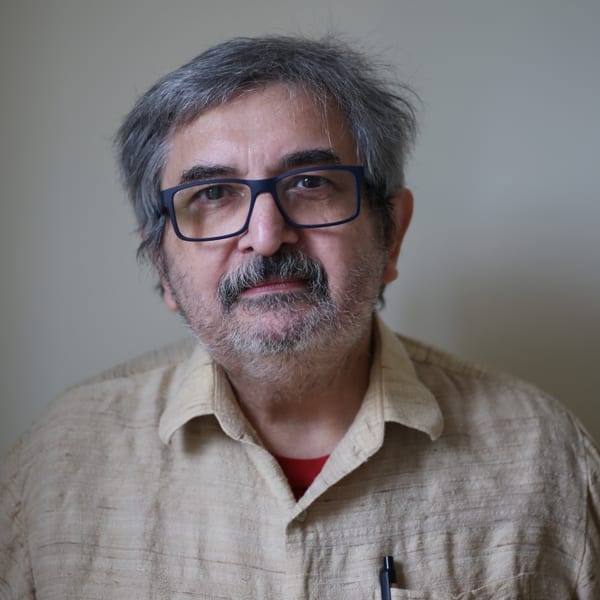

Photo: Christine Alalo, who died in the Ethiopian plane crash (Credit Flickr/AMISON)